Maximize Swift Apps with OpenAI API Function Calls

Enhance your iOS apps with OpenAI API function calls. Master integrating advanced AI chat functions in Swift for dynamic interactions and smarter user experiences.

Introduction

Function calls let the AI model request that your app run predefined actions. The model returns a function name and JSON arguments, your app executes the function, and the result goes back to the model so it can phrase the reply. Whether it’s fetching weather data, processing payments, or generating tailored recommendations, bringing these actions into a conversational interface makes the app more useful and engaging.

This guide walks step by step through incorporating function calls in a Swift application using Alamofire, a widely used Swift HTTP networking library, together with Combine and SwiftUI.

Setting Up the OpenAIParameters Structure

Central to interacting with the OpenAI API is the creation of a well-defined data structure that encapsulates all the necessary parameters for making a request. This is where the `OpenAIParameters` struct comes into play. It represents the request body and outlines the instructions the AI model needs to process and respond appropriately.

Let’s break down each field within the `OpenAIParameters` struct and understand its purpose:

model: Specifies the particular OpenAI model in use. For instance, `gpt-3.5-turbo` is a versatile model suitable for various applications.

messages: An array of `OpenAIMessage` objects storing the conversation history. Each message records both user inputs (`role`: "user") and the model’s responses (`role`: "assistant"), maintaining context and coherence.

functions: An array of `Function` objects defining custom functions that your application can call. It includes the function’s name, description, and parameters.

function_call: Dictates how the AI model should handle function calls. Setting it to `"auto"` lets the model decide whether to call a function or reply with text.

max_tokens: Limits the number of tokens the model generates in its response, helping manage reply length and API usage costs. Tokens are sub-word units of text, so a single word may span one or more tokens.

Here’s a Swift code snippet that illustrates the foundational setup:

struct OpenAIParameters: Codable {
    let model: String
    let messages: [OpenAIMessage]
    let functions: [Function]
    let function_call: String
    let max_tokens: Int
}

struct OpenAIMessage: Codable {
    let role: String
    let content: String?
    // Declared as `var` with a `nil` default so the memberwise initializer
    // lets callers omit them, e.g. OpenAIMessage(role: "user", content: "Hi")
    var function_call: FunctionCall? = nil
    var name: String? = nil
}

struct Function: Codable {
    let name: String
    let description: String
    let parameters: Parameters
}

struct Parameters: Codable {
    let type: String
    let properties: [String: Property]
    let required: [String]
}

struct Property: Codable {
    let type: String
    let description: String?
}

Because every type conforms to `Codable`, the request body can be encoded to JSON in the shape the OpenAI API expects, which lays the groundwork for the rest of the integration.
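To see what this request body looks like on the wire, the following sketch encodes a sample request with `JSONEncoder`. It uses trimmed stand-ins for the structs above (`RequestBody` and `Message` are simplified, hypothetical names) so that it runs on its own; the prompt text is made up for illustration.

```swift
import Foundation

// Trimmed stand-ins for the guide's structs so this snippet compiles on its own.
struct Property: Codable { let type: String; let description: String? }
struct Parameters: Codable {
    let type: String
    let properties: [String: Property]
    let required: [String]
}
struct Function: Codable {
    let name: String
    let description: String
    let parameters: Parameters
}
struct Message: Codable { let role: String; let content: String? }  // simplified OpenAIMessage
struct RequestBody: Codable {                                        // simplified OpenAIParameters
    let model: String
    let messages: [Message]
    let functions: [Function]
    let function_call: String
    let max_tokens: Int
}

let body = RequestBody(
    model: "gpt-3.5-turbo",
    messages: [Message(role: "user", content: "What's the weather in Boston?")],
    functions: [Function(
        name: "get_current_weather",
        description: "Get the current weather for a given location",
        parameters: Parameters(
            type: "object",
            properties: ["location": Property(type: "string", description: "City name")],
            required: ["location"]))],
    function_call: "auto",
    max_tokens: 256)

// Sorted keys and pretty printing make the output easier to read while debugging.
let encoder = JSONEncoder()
encoder.outputFormatting = [.prettyPrinted, .sortedKeys]
let data = try encoder.encode(body)
print(String(decoding: data, as: UTF8.self))

// Parse the JSON back into a dictionary to inspect individual keys.
let roundTrip = try JSONSerialization.jsonObject(with: data) as? [String: Any]
```

Optional properties that are `nil` are left out of the encoded JSON by the synthesized `Codable` conformance, so unset fields do not appear in the request.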

Defining Functions with Structs

With the `OpenAIParameters` structure in place, the next step is to define custom functions that the OpenAI model can invoke. Each `Function` struct includes:

name: A unique identifier for the function.

description: A human-readable explanation of the function’s purpose.

parameters: Defines the function's inputs, detailed using a `Parameters` struct that specifies types, properties, and required fields.

Example: Defining a Weather Function

// `Function`, `Parameters`, and `Property` are the structs defined in the previous section.

// Defining the `getCurrentWeather` function
let getCurrentWeatherFunction = Function(
    name: "get_current_weather",
    description: "Get the current weather for a given location",
    parameters: Parameters(
        type: "object", // typically "object", since the parameters form a JSON object
        properties: [
            "location": Property(type: "string", description: "The location to fetch the weather for, usually a city name or coordinates."),
            "unit": Property(type: "string", description: "The unit to use for temperature measurement (optional, defaults to Fahrenheit).")
        ],
        required: ["location"]
    )
)

By describing each function and its parameters clearly, you give the model what it needs to decide when to call the function and which arguments to pass.
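The parameter definitions follow JSON Schema, which also offers an `enum` keyword for restricting a string to a fixed set of values. The guide's `Property` struct does not model it; the sketch below shows one way it might be added. `EnumProperty` and `allowedValues` are hypothetical names introduced here for illustration.

```swift
import Foundation

// Hypothetical variant of `Property` with an optional list of allowed values.
struct EnumProperty: Codable {
    let type: String
    let description: String?
    let allowedValues: [String]?

    // JSON Schema names this key "enum", which is a reserved word in Swift,
    // so it is mapped through CodingKeys.
    enum CodingKeys: String, CodingKey {
        case type, description
        case allowedValues = "enum"
    }
}

let unit = EnumProperty(
    type: "string",
    description: "The unit to use for temperature measurement",
    allowedValues: ["celsius", "fahrenheit"])

let data = try JSONEncoder().encode(unit)
let object = try JSONSerialization.jsonObject(with: data) as? [String: Any]
print(object?["enum"] ?? "missing")
```

Constraining `unit` this way should make it less likely that the model passes a value your function does not handle, though validating the arguments in your own code is still advisable.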

Encapsulating Request Logic in OpenAIService Class

Centralizing API request logic within a service class can streamline the process of managing OpenAI interactions. The OpenAIService class handles the entire lifecycle of interacting with the OpenAI API.
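The service code in this section refers to `OpenAIResponse`, `FunctionCall`, and `Arguments`, which this guide does not define. A minimal sketch of what they might look like follows, assuming the usual chat completions response shape; the `Choice` and `ResponseMessage` names and the sample JSON are illustrative (in the guide, the message type would be `OpenAIMessage`).

```swift
import Foundation

// Assumed shapes: the guide uses these types without defining them.
struct FunctionCall: Codable {
    let name: String
    let arguments: String   // a JSON-encoded string that is decoded separately
}
struct ResponseMessage: Codable {
    let role: String
    let content: String?    // null when the model asks for a function call
    let function_call: FunctionCall?
}
struct Choice: Codable { let message: ResponseMessage }
struct OpenAIResponse: Codable {
    let id: String
    let choices: [Choice]
}
// Arguments expected by `get_current_weather`.
struct Arguments: Codable {
    let location: String
    let unit: String?
}

// A raw string literal keeps the escaped quotes inside `arguments` intact.
let sample = #"""
{
  "id": "chatcmpl-123",
  "choices": [{
    "index": 0,
    "message": {
      "role": "assistant",
      "content": null,
      "function_call": {
        "name": "get_current_weather",
        "arguments": "{\"location\": \"Boston\", \"unit\": \"celsius\"}"
      }
    },
    "finish_reason": "function_call"
  }]
}
"""#

let response = try JSONDecoder().decode(OpenAIResponse.self, from: Data(sample.utf8))
let call = response.choices.first?.message.function_call

// The arguments arrive as a string, so they need a second decoding pass.
let args = try call.map {
    try JSONDecoder().decode(Arguments.self, from: Data($0.arguments.utf8))
}
print(call?.name ?? "no function call", args?.location ?? "no location")
```

Keeping `arguments` as a `String` matches how the service later converts it to `Data` before decoding it into `Arguments`.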

Key Components of `OpenAIService`

1. Initialization:

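import Alamofire
import Combine
import Foundation

class OpenAIService {
    let baseUrl = "https://api.openai.com/v1/chat/completions"
    var isLoading: Bool = false
    var messages: [OpenAIMessage] = []

    // The methods shown in the following steps are members of this class
}

2. Sending Requests: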

func makeRequest(message: OpenAIMessage) -> AnyPublisher<OpenAIResponse, Error> {
    messages.append(message)

    let functions: [Function] = [getCurrentWeatherFunction] // Include our defined functions

    let parameters = OpenAIParameters(
        model: "gpt-3.5-turbo-0613",
        messages: messages,
        functions: functions,
        function_call: "auto",
        max_tokens: 256
    )

    let headers: HTTPHeaders = ["Authorization": "Bearer \(Constants.OpenAIAPIKey)"]

    // Networking logic using Alamofire and Combine
    return Future { [weak self] promise in
        self?.performNetworkRequest(with: parameters, headers: headers, promise: promise)
    }
    .eraseToAnyPublisher()
}

private func performNetworkRequest(with parameters: OpenAIParameters,
                                   headers: HTTPHeaders,
                                   promise: @escaping (Result<OpenAIResponse, Error>) -> Void) {
    AF.request(baseUrl,
               method: .post,
               parameters: parameters,
               encoder: .json,
               headers: headers
    )
    .validate() // Ensures we only proceed with valid HTTP responses
    .responseDecodable(of: OpenAIResponse.self) { response in
        switch response.result {
        case .success(let result):
            promise(.success(result))
        case .failure(let error):
            promise(.failure(error))
        }
    }
}

3. Handling Function Calls:

func handleFunctionCall(functionCall: FunctionCall, completion: @escaping (Result<String, Error>) -> Void) {
    // Record the model's function call in the conversation history
    self.messages.append(OpenAIMessage(role: "assistant", content: "", function_call: functionCall))

    // Map the function name to the actual function implementation
    let availableFunctions: [String: (String, String?) -> String] = ["get_current_weather": getCurrentWeather]

    // Attempt to execute the named function with provided arguments
    if let functionToCall = availableFunctions[functionCall.name],
       let jsonData = functionCall.arguments.data(using: .utf8) {
        do {
            let arguments = try JSONDecoder().decode(Arguments.self, from: jsonData)
            let functionResponse = functionToCall(arguments.location, arguments.unit)
            completion(.success(functionResponse))
        } catch {
            completion(.failure(error))
        }
    } else {
        let error = NSError(domain: "", code: 0, userInfo: [NSLocalizedDescriptionKey: "Function not found or supported."])
        completion(.failure(error))
    }
}

// Example dummy function hard coded to return the same weather
// In production, this could be your backend API or an external API
func getCurrentWeather(location: String, unit: String?) -> String {
    let weatherInfo: [String: Any] = [
        "location": location,
        "temperature": "72",
        "unit": unit ?? "fahrenheit",
        "forecast": ["sunny", "windy"],
    ]

    // Fall back to an empty JSON object instead of force-unwrapping
    guard let jsonData = try? JSONSerialization.data(withJSONObject: weatherInfo, options: .prettyPrinted),
          let json = String(data: jsonData, encoding: .utf8) else {
        return "{}"
    }
    return json
}

Integrating OpenAI Functionality in SwiftUI Views

The `ChatViewModel` bridges `OpenAIService` with SwiftUI views, managing message sending, response handling, and UI updates.
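The view model and views work with a `ChatMessage` type that this guide does not define. Judging from how it is used (an `id`, `content`, `createdAt`, and a `sender` that is either `.me` or `.chatGPT`), it might look roughly like the sketch below; the `MessageSender` name is an assumption.

```swift
import Foundation

// Assumed model types: the guide references `ChatMessage` but does not define it.
enum MessageSender {
    case me
    case chatGPT
}

struct ChatMessage: Identifiable {
    let id: String
    let content: String
    let createdAt: Date
    let sender: MessageSender
}

// Building a message the same way the view model does.
let example = ChatMessage(id: UUID().uuidString,
                          content: "Hello",
                          createdAt: Date(),
                          sender: .me)
print(example.content)
```

Conforming to `Identifiable` is optional here, since the view passes `id: \.id` to `ForEach` explicitly.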

ChatViewModel Implementation:

class ChatViewModel: ObservableObject {

// Store active subscriptions

private var cancellables = Set<AnyCancellable>()

// Published properties allow the view to react to changes

@Published var chatMessages: [ChatMessage] = []

@Published var lastMessageID: String = ""

let openAIService: OpenAIService

init(openAIService: OpenAIService = OpenAIService()) {

self.openAIService = openAIService

}

func sendMessage (message: String) {

guard message != "" else {return}

let myMessage = ChatMessage(id: UUID().uuidString, content: message, createdAt: Date(), sender: .me)

chatMessages.append(myMessage)

lastMessageID = myMessage.id

openAIService.makeRequest(message: OpenAIMessage(role: "user", content: message))

.sink { completion in

/// - Handle Error here

switch completion {

case .failure(let error): print(error.localizedDescription)

case .finished: break

}

} receiveValue: { response in

self.handleResponse(response: response)

}

.store(in: &cancellables)

}

func handleResponse(response: OpenAIResponse) {

guard let message = response.choices.first?.message else { return }

// Extract the message from the response

// If it includes a function call, we handle the function call separately

// Otherwise, we append a standard chat message to our `chatMessages`

if let functionCall = message.function_call {

handleFunctionCall(functionCall: functionCall)

chatMessages.append(ChatMessage(id: response.id, content: "Calling function \(functionCall.name)", createdAt: Date(), sender: .chatGPT))

} else if let textResponse = message.content?.trimmingCharacters(in: .whitespacesAndNewlines.union(.init(charactersIn: "\""))) {

chatMessages.append(ChatMessage(id: response.id, content: textResponse, createdAt: Date(), sender: .chatGPT))

lastMessageID = response.id

}

}

func handleFunctionCall(functionCall: FunctionCall) {

// Call the corresponding function in our service

// Handle the response or error

// Append the function response as a new message to our `chatMessages`

self.openAIService.handleFunctionCall(functionCall: functionCall) { result in

switch result {

case .success(let functionResponse):

self.openAIService.makeRequest(

message: OpenAIMessage(

role: "function",

content: functionResponse,

name: functionCall.name

)

)

.sink(receiveCompletion: { completion in

switch completion {

case .failure(let error): print("error", error)

case .finished: break

}

}, receiveValue: { response in

guard let responseMessage = response.choices.first?.message else {

return

}

guard let textResponse = responseMessage.content?

.trimmingCharacters(in: .whitespacesAndNewlines.union(.init(charactersIn: "\""))) else {return}

let chatGPTMessage = ChatMessage(id: response.id,

content: textResponse,

createdAt: Date(),

sender: .chatGPT

)

self.chatMessages.append(chatGPTMessage)

self.lastMessageID = chatGPTMessage.id

})

.store(in: &self.cancellables)

case .failure(let error):

print(error.localizedDescription)

}

}

}

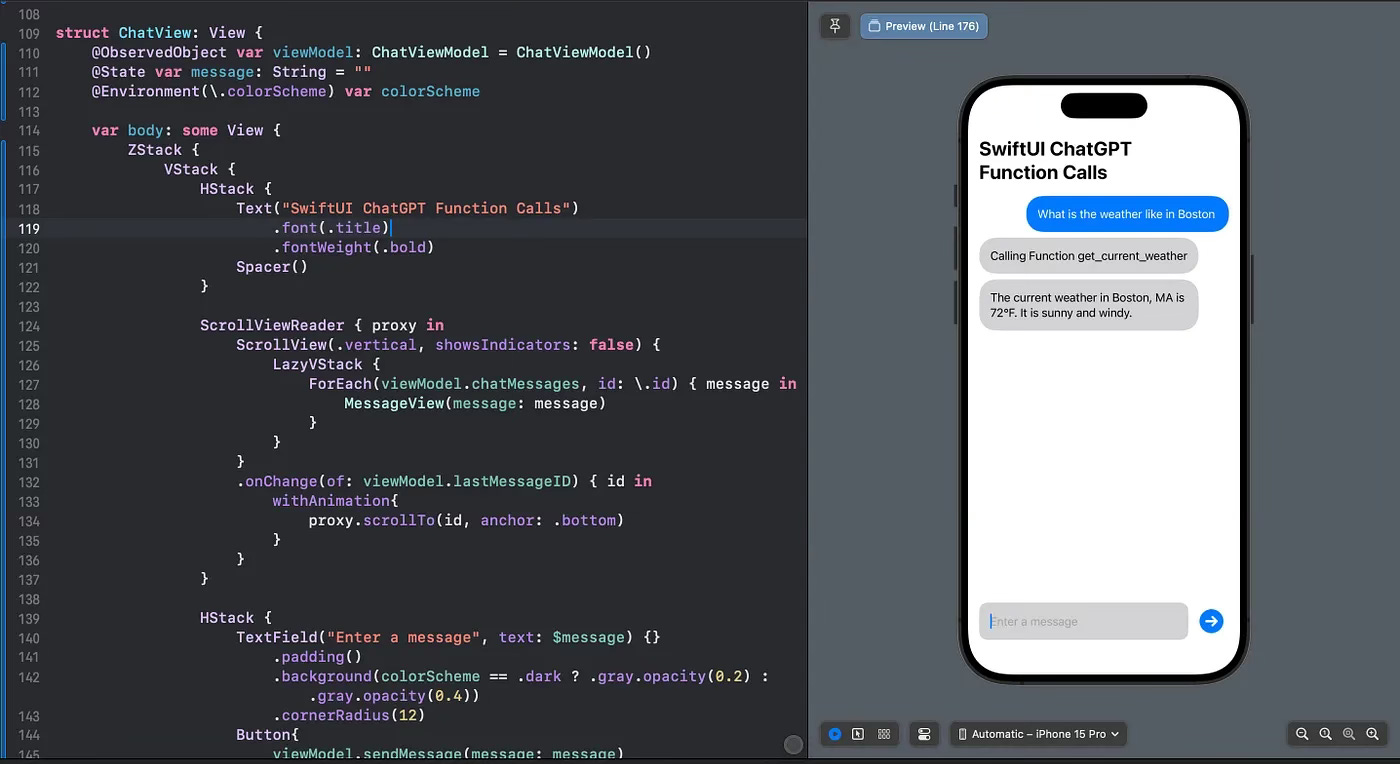

}ChatView:

struct ChatView: View {
    // The view creates and owns the view model, so @StateObject keeps it alive across re-renders
    @StateObject private var viewModel = ChatViewModel()
    @State var message: String = ""
    @Environment(\.colorScheme) var colorScheme

    var body: some View {
        ZStack {
            VStack {
                HStack {
                    Text("SwiftUI ChatGPT")
                        .font(.title)
                        .fontWeight(.bold)
                    Spacer()
                }

                ScrollViewReader { proxy in
                    ScrollView(.vertical, showsIndicators: false) {
                        LazyVStack {
                            ForEach(viewModel.chatMessages, id: \.id) { message in
                                MessageView(message: message)
                            }
                        }
                    }
                    // Scroll to the newest message whenever it changes
                    .onChange(of: viewModel.lastMessageID) { id in
                        withAnimation {
                            proxy.scrollTo(id, anchor: .bottom)
                        }
                    }
                }

                HStack {
                    TextField("Enter a message", text: $message)
                        .padding()
                        .background(colorScheme == .dark ? .gray.opacity(0.2) : .gray.opacity(0.4))
                        .cornerRadius(12)

                    Button {
                        viewModel.sendMessage(message: message)
                        message = ""
                    } label: {
                        Image(systemName: "arrow.right.circle.fill")
                            .foregroundColor(.blue)
                            .padding(.horizontal, 5)
                            .font(.largeTitle)
                            .fontWeight(.semibold)
                    }
                }
            }
            .padding()
        }
    }
}

Lastly, `MessageView` is a supporting SwiftUI view designed to display an individual chat message:

struct MessageView: View {
    var message: ChatMessage

    var body: some View {
        HStack {
            // Push the user's messages to the right and the model's to the left
            if message.sender == .me { Spacer() }
            Text(message.content)
                .foregroundColor(message.sender == .me ? .white : nil)
                .padding()
                .background(message.sender == .me ? .blue : .gray.opacity(0.4))
                .cornerRadius(24)
            if message.sender == .chatGPT { Spacer() }
        }
    }
}

Conclusion

In this guide, we’ve detailed the process of integrating OpenAI’s API into a Swift application, from setting up request structures and defining functions to managing message flows and implementing function calls. Using Swift’s Codable protocol and the Combine framework alongside Alamofire, we've built a system for interacting with conversational AI that can serve as the basis for engaging, intelligent chat interfaces.

For the complete project and further details, check out the full source code here